The concept of brain-computer interfaces (BCIs) has long been the stuff of science fiction, but recent advancements in neural technology are bringing it closer to reality. Among the most groundbreaking developments are brain-computer chips—tiny, implantable devices designed to bridge the gap between human cognition and artificial systems. These chips promise to revolutionize medicine, communication, and even human augmentation, raising both excitement and ethical questions.

At the core of this technology lies the ability to decode neural signals and translate them into actionable commands. Companies like Neuralink, co-founded by Elon Musk, are developing high-bandwidth brain implants that aim to treat neurological disorders, restore mobility to paralyzed patients, and eventually enable direct communication between humans and machines. Early trials have shown promising results, with paralyzed individuals controlling computer cursors and robotic limbs through thought alone.

How Brain-Computer Chips Work

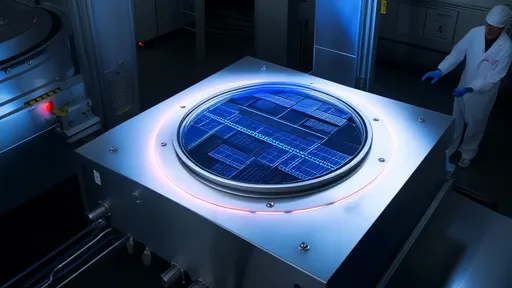

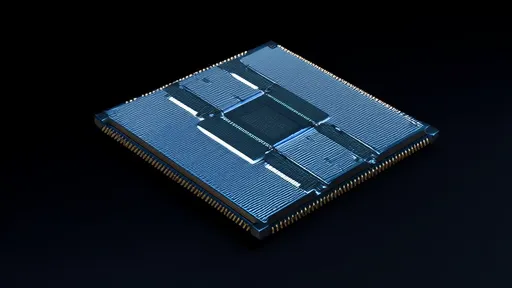

Brain-computer chips function by interfacing directly with neurons, the brain's primary signaling cells. These chips are embedded with microelectrodes that detect electrical activity in the brain, which is then processed by onboard algorithms. The data can be wirelessly transmitted to external devices, allowing for real-time interaction. For instance, a person with a spinal cord injury could use the chip to move a prosthetic arm simply by imagining the motion.
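
To make the detect, process, and act pipeline described above more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not any vendor's actual algorithm: the function names (`detect_spikes`, `firing_rate`, `decode_velocity`), the threshold multiplier `k`, and the toy decoder weights are all hypothetical. It uses simple threshold crossing to find spikes and a linear map from firing rates to a 2D velocity command, which is one common textbook approach among many.

```python
from statistics import median


def detect_spikes(samples, k=4.5):
    """Return indices where the trace first drops below a negative threshold.

    The noise level is estimated robustly as median(|x|) / 0.6745, a common
    heuristic; the multiplier k is an assumed tuning parameter.
    """
    sigma = median(abs(x) for x in samples) / 0.6745
    threshold = -k * sigma
    return [
        i
        for i in range(1, len(samples))
        if samples[i] < threshold <= samples[i - 1]
    ]


def firing_rate(spike_indices, n_samples, sample_rate_hz):
    """Average spikes per second over a recording of n_samples samples."""
    return len(spike_indices) * sample_rate_hz / n_samples


def decode_velocity(rates, weights, bias):
    """Linear decoder: one output per row of weights (here: vx, vy)."""
    return [
        sum(w * r for w, r in zip(row, rates)) + b
        for row, b in zip(weights, bias)
    ]


# Toy electrode trace: low-amplitude noise with two large negative deflections.
trace = [1, -1, 2, -2, 1, -40, -1, 2, -1, 1, -2, -45, 1, -1, 2, -2]
spikes = detect_spikes(trace)
print(spikes)  # [5, 11]

# Pretend a second electrode was silent, then map both rates to a velocity.
rates = [firing_rate(spikes, len(trace), sample_rate_hz=1000), 0.0]
toy_weights = [[0.5, -0.5], [0.25, 0.25]]  # hypothetical, would be fitted
print(decode_velocity(rates, toy_weights, bias=[0.0, 0.0]))
```

In a real system the decoder weights would be fitted to recordings made while the user imagines or attempts movements, and the output would drive a cursor or prosthetic controller rather than a print statement.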

One of the biggest challenges has been achieving a stable, long-term connection between the chip and brain tissue. The brain's immune response often leads to scar tissue formation around implants, degrading signal quality over time. Researchers are experimenting with flexible, biocompatible materials to minimize this reaction. Some teams are even developing "neural lace" technology, a flexible, mesh-like electrode array intended to integrate closely with brain tissue.

Medical Breakthroughs and Beyond

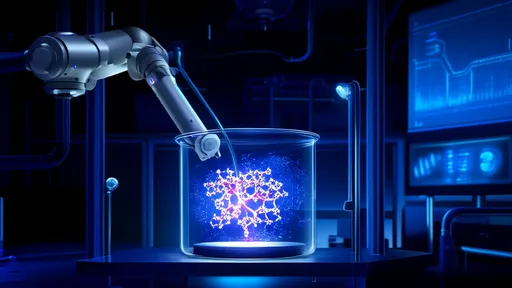

The medical applications of brain-computer chips are wide-ranging. Beyond restoring movement to the paralyzed, these devices could treat conditions like epilepsy, Parkinson's disease, and chronic pain by modulating abnormal neural activity. In some experimental cases, chips have been used to restore partial vision to the blind by bypassing damaged optic nerves and stimulating the visual cortex directly.
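
The idea of modulating abnormal neural activity can be sketched as a closed loop: monitor the signal, flag windows that look abnormal, and trigger stimulation in response. The Python below is a simplified illustration, not a clinical algorithm. The function names, the window size, and the threshold are hypothetical, and the feature used (line length, the sum of absolute sample-to-sample differences) is only one of several features that could serve this role.

```python
def line_length(window):
    """Sum of absolute differences between consecutive samples."""
    return sum(abs(b - a) for a, b in zip(window, window[1:]))


def stimulation_triggers(samples, window_size, threshold):
    """Return start indices of non-overlapping windows flagged as abnormal.

    A window is flagged when its line length exceeds the threshold, which
    in practice would have to be calibrated per patient (assumption).
    """
    triggers = []
    for start in range(0, len(samples) - window_size + 1, window_size):
        window = samples[start:start + window_size]
        if line_length(window) > threshold:
            triggers.append(start)
    return triggers


# Toy recording: calm activity, one burst of large swings, then calm again.
recording = [0, 1, 0, 1, 0, 10, -10, 10, 0, 1, 0, 1]
print(stimulation_triggers(recording, window_size=4, threshold=10))  # [4]
```

Here only the middle window crosses the threshold, so a device following this scheme would stimulate once, at the burst, and stay idle otherwise.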

However, the potential extends far beyond healthcare. Tech visionaries speculate about a future where brain chips enhance cognitive abilities, enabling instant access to information or even telepathic communication. While this may sound like science fiction, companies are already exploring "memory prosthetics" to combat dementia and brain implants for augmented reality integration.

Ethical and Societal Implications

As with any transformative technology, brain-computer chips come with profound ethical dilemmas. Privacy concerns top the list—how can we ensure neural data, which may include intimate thoughts and emotions, remains secure? There are also fears of inequality, where only the wealthy can afford cognitive enhancements, creating a new class divide. Regulatory bodies are scrambling to establish guidelines, but the pace of innovation often outstrips policy development.

Another critical debate centers on identity and autonomy. If a chip can influence or override neural processes, to what extent does it alter a person's sense of self? Philosophers and neuroscientists alike warn against underestimating the psychological impact of merging silicon with consciousness. Public acceptance remains a hurdle, with many wary of the idea of "hacking" the human brain.

The Road Ahead

Despite the challenges, investment in brain-computer chip technology continues to surge. Military agencies see potential for superhuman soldiers, while educators dream of accelerated learning through direct knowledge uploads. Meanwhile, the gaming industry envisions fully immersive experiences controlled purely by thought. The next decade will likely see the first commercial applications, starting with medical devices before expanding into consumer markets.

What makes this field unique is its interdisciplinary nature—neuroscience, materials engineering, AI, and ethics must all converge to make brain-computer interfaces viable. As research progresses, society will need to grapple with fundamental questions about what it means to be human in an age where minds can merge with machines. One thing is certain: the development of brain-computer chips marks a pivotal moment in our technological evolution, with implications that will resonate for generations to come.

By /Aug 15, 2025
